New release of COUNTER 5 brings new metrics

Through Project COUNTER, the library and publisher communities work together to establish a common set of standards and best practices for how usage data should be collected. This allows librarians to compare data from different publishers and vendors, knowing that they aren’t comparing apples with pears.

At the start of 2019, Release 5 was rolled out as the current Code of Practice. This new Code of Practice introduced a new structure and a new set of metrics. One year on, we take a look at what changed in COUNTER 5, and what to bear in mind if you’re currently assessing the first full year of metrics using the new reporting parameters.

What’s changed?

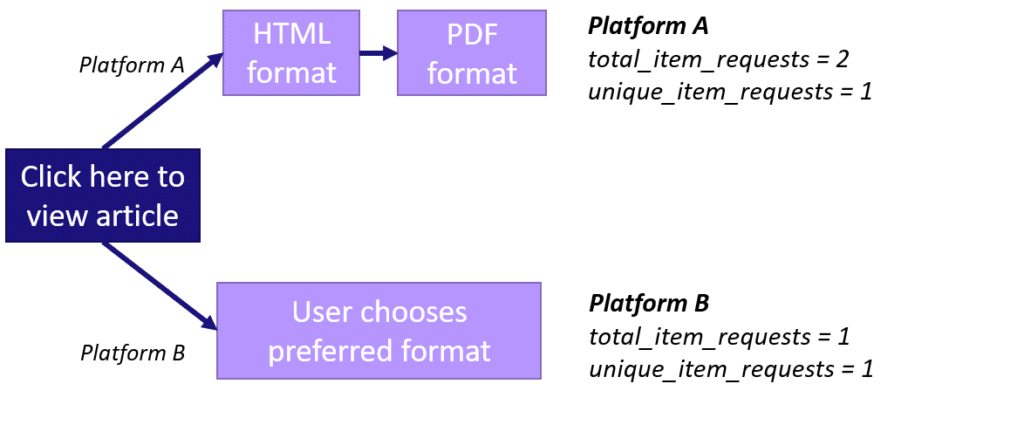

Release 5 addresses duplications and inconsistencies in counting across different platform journeys with two new metrics: total_item_requests and unique_item_requests.

Total_item_requests: Counts all article full-content views across all formats, such as HTML and PDF. This metric is comparable to the previous Release 4 usage count.

Unique_item_requests: Counts unique article full-content views regardless of format. If a user views both the PDF and the HTML version of an article in the same session, this counts only as 1.

The new Release 5 total_item_requests metric is comparable to the previous Release 4 metric. The new unique_item_requests metric, however, addresses inconsistency across platforms and makes the data more comparable, because it counts unique views within a session regardless of format.
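The difference between the two metrics can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the COUNTER specification: the `Request` record, its field names, the `count_requests` helper, and the sample log are all hypothetical, and the full Code of Practice may apply further processing rules that are not modeled here.

```python
from collections import namedtuple

# Hypothetical record of one full-content view (field names are assumptions).
Request = namedtuple("Request", ["session_id", "article_id", "fmt"])


def count_requests(requests):
    """Return (total_item_requests, unique_item_requests) for a list of views."""
    # Total: every full-content view counts, whatever the format.
    total = len(requests)
    # Unique: the same article viewed in the same session counts once,
    # regardless of whether it was opened as HTML, PDF, or both.
    unique = len({(r.session_id, r.article_id) for r in requests})
    return total, unique


# Hypothetical sample log: session s1 opens article A as both HTML and PDF.
log = [
    Request("s1", "article-A", "HTML"),
    Request("s1", "article-A", "PDF"),
    Request("s1", "article-B", "PDF"),
    Request("s2", "article-A", "HTML"),
]

total, unique = count_requests(log)
print(total, unique)  # 4 3
```

In this sample, the HTML and PDF views of article A in session s1 add 2 to the total but only 1 to the unique count, which is why a platform that routes users through an HTML page before the PDF no longer inflates the figure.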

How will you report?

- Will you use total_item_requests because it is most comparable with Release 4?

- Will you start to use unique_item_requests, which is more comparable across publishers?

- Or will you use both?

All these options have their merits. For further information, FAQs on the COUNTER 5 metrics, and more on how you can benefit from more transparent reporting, visit Taylor & Francis Online.